I'm an interdisciplinary designer working at the intersection of society, technology and the built environment.

Making a New City Image ... or, an Eye for AI

Understanding our machine-mediated perception of cities with computer vision cartography.

CHALLENGE

The view from above does not match the view from the ground. Maps and plans are useful tools, but they overlook important details; the individual perspective is now mediated by machines and technologies.

PROJECT

Multifaceted research into historical methods of mapping and seeing cities, coupled with a new system of computer vision and deep learning on street-level imagery to produce new cartographies based on human perception.

DETAILS

Harvard University Graduate School of Design (GSD) + School of Engineering and Applied Sciences (SEAS)

Master in Design Engineering thesis

Robert Pietrusko and Krzysztof Gajos, advisors

Awarded the ESRI Development Center Student of the Year Award in March 2018.

Published in the GSD’s Platform 11: Setting the Table.

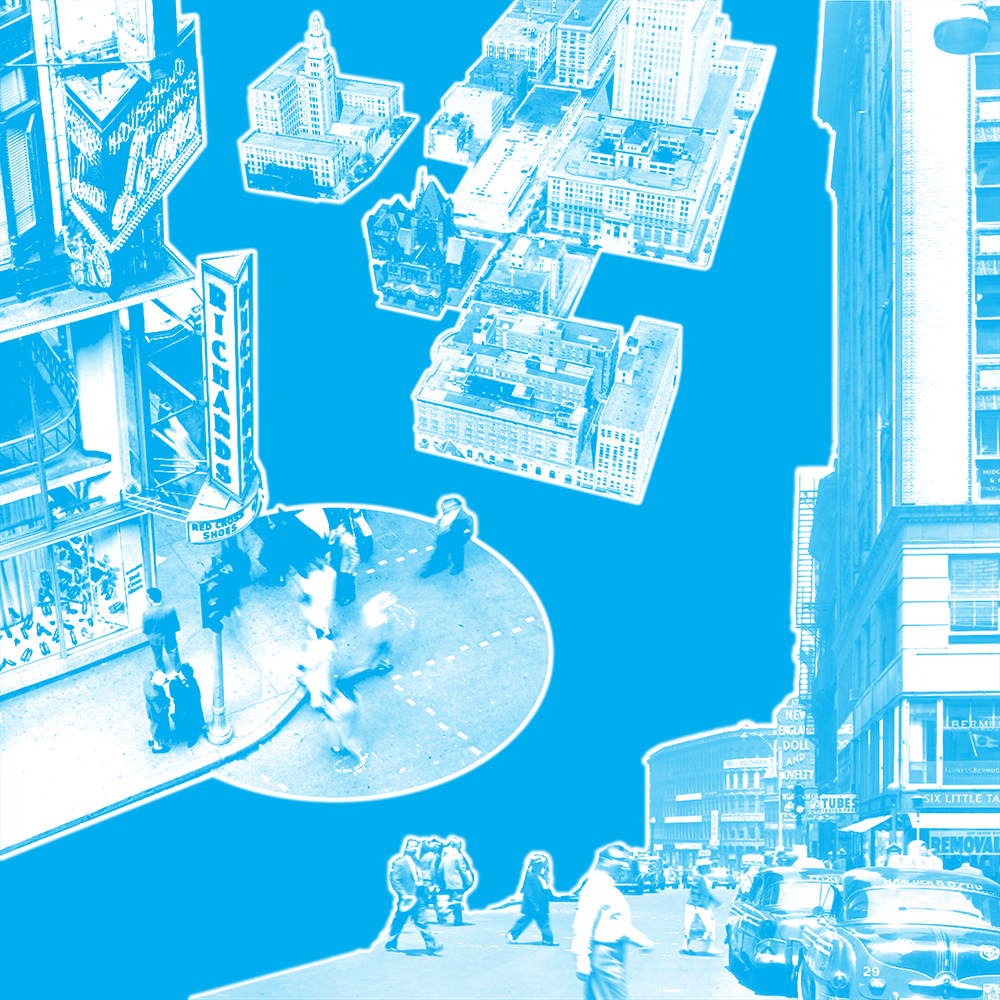

Making a New City Image explores the machine-mediated perception of urban form: new ways of seeing, understanding and experiencing cities in the information age.

Spanning the disciplines of urbanism and computer science, this project fosters a productive dialogue between the two. What might we learn about the city using computer vision, deep learning and data science? How might the history, theory and practice of urbanism — which has long viewed the city as a subject of measurement — inform modern applications of computation to cities? Most importantly, can we ensure that both disciplines understand the city as it is perceived by people?

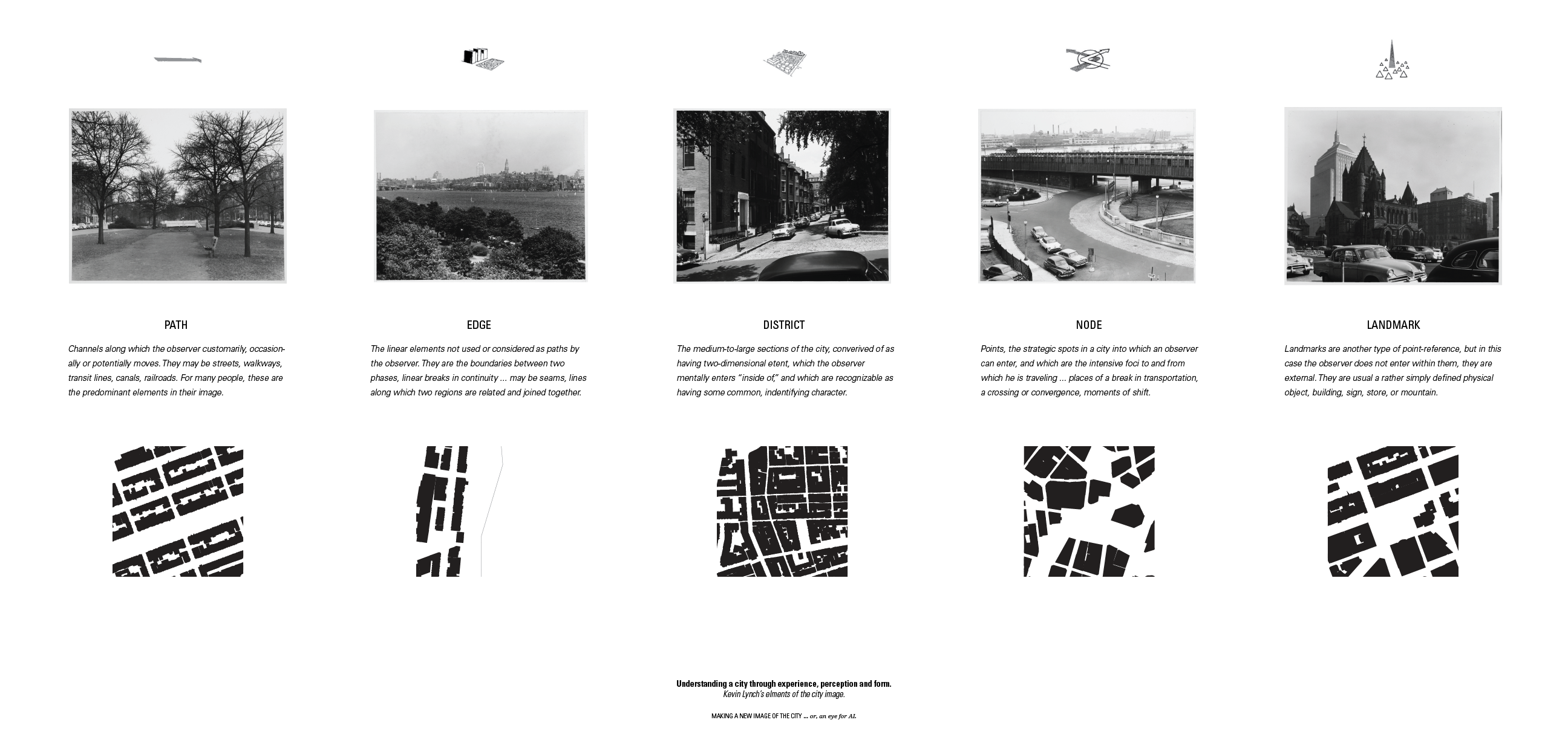

This thesis also formalizes a practice of computer vision cartography. First, it revisits Kevin Lynch’s The Image of the City, applying machine learning models to archival photographs and maps of Boston (new-city-image.io) and classifying Lynch’s elements of the city image from them. Second, it adapts this process to the present day: I created instruments for the procedural capture of street-level imagery, and for identifying types of urban environments from crowdsourced human input (bit.ly/new-city-image).

Together, these methods produce a mode of analysis that balances a comprehensive perspective at the scale of the city with a focus on the texture, color and details of urban life.

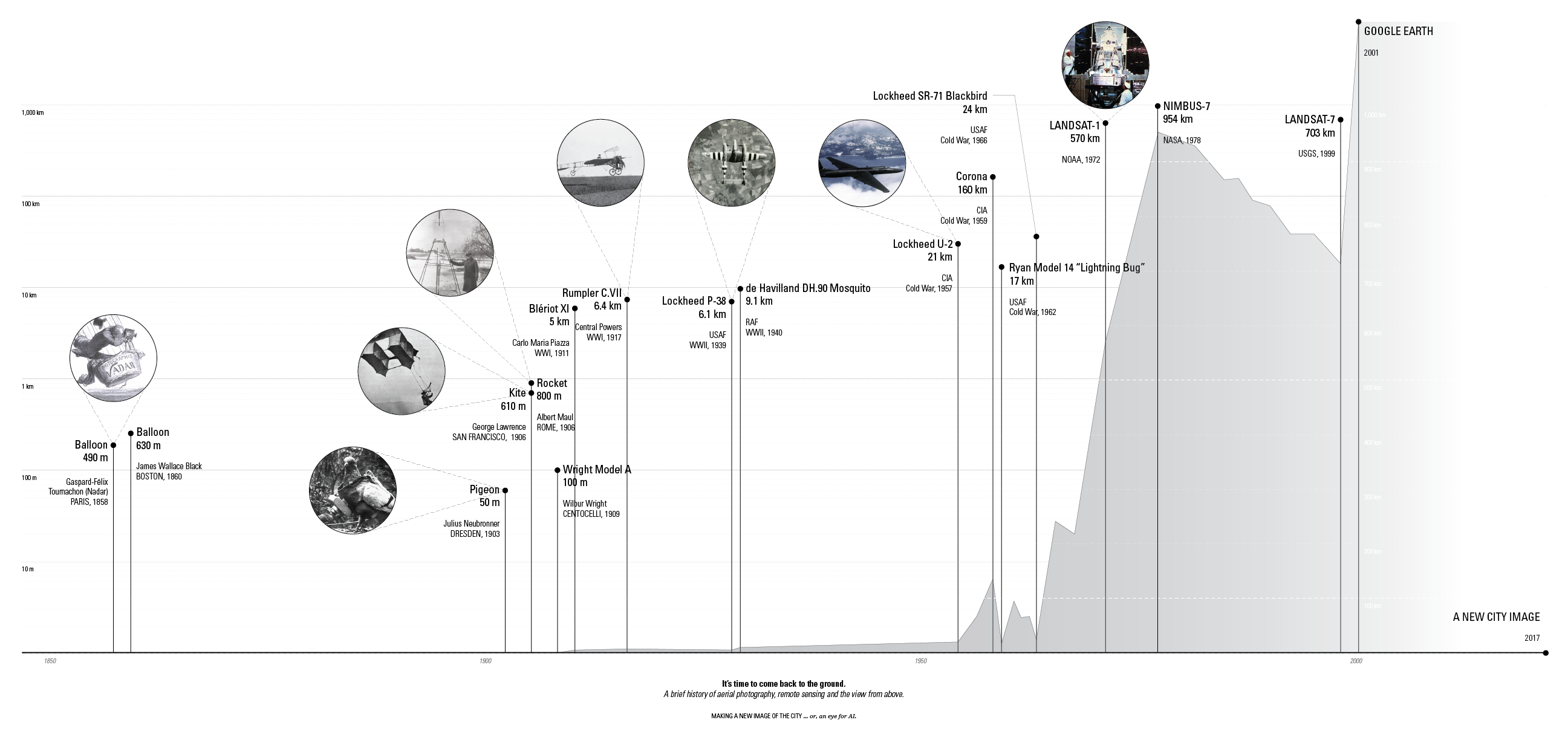

Challenging the plan perspective

The project seeks to reconcile two different views of cities: the view from above and the view from the ground.

Exploring machine-mediated vision

The project also investigates the role of technology in shaping human perception of urban form.

Process 1: Inputs

How can urban theory and history inform the data inputs for computational models of cities? By training a convolutional neural network to classify the elements of the city image from geocoded and labeled images in the Kevin Lynch archive (accessed via the Flickr API), we can combine historical and theoretical perspectives with new digital approaches.

Images from the Kevin Lynch archive were geocoded and labeled using a re-projection (created in GIS) of his original city image diagram. The resulting geocoded images can be viewed online.
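One way to picture the labeling step is as a point-in-polygon lookup: each geocoded image takes the label of the diagram region it falls within. The sketch below is only an illustration under that assumption, not the GIS workflow actually used. The `regions` structure, the function names and the example coordinates are hypothetical, and it assumes each element of the diagram (including linear paths and edges) has been drawn as a polygon in the same coordinate system as the image locations.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) lies inside the polygon.

    polygon is a list of (x, y) vertices in order; the last vertex
    is implicitly connected back to the first.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def label_image(lon, lat, regions):
    """Return the label of the first region containing the point, else None.

    regions is a hypothetical list of (label, polygon) pairs, e.g. one
    polygon per district or buffered path traced from the diagram.
    """
    for label, polygon in regions:
        if point_in_polygon(lon, lat, polygon):
            return label
    return None


# Illustrative, made-up region: a unit-scale square standing in for a district.
example_regions = [("district", [(0, 0), (2, 0), (2, 2), (0, 2)])]
example_label = label_image(1, 1, example_regions)
```

Images that fall outside every region return `None`, which leaves room for a manual labeling pass.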

A web interface was developed to allow for manual geocoding of images by comparison to Google Street View. The existing image captions were first parsed by the Google Places search API, to provide approximate locations.

The results were also visualized via projection mapping onto a milled topographic surface, with height corresponding to building density (a first approximation of a perceptual geography in Boston).

Process 2: Instrument

How can new digital methods extend the knowledge of urbanism and design practice? By building a device to capture 360-degree imagery, with each image associated with a GPS tag, we can build our own dataset to represent the city of Boston today.

The device in exploded axonometric. Design of the device combined digital CAD methods with 3D printing; different materials were selected for components based on desired mechanical properties.

The resulting dataset, captured over a week and about 100 miles. Associated map tiles were also downloaded from Mapbox, to create a concatenated input array.
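Pairing each captured image with a map tile requires knowing which tile contains its GPS tag. A minimal sketch follows, assuming the standard Web Mercator XYZ ("slippy map") tiling scheme; the function name and the zoom level in the example are illustrative choices, not details from the project.

```python
import math


def tile_for_gps(lat, lon, zoom):
    """Return the (x, y) index of the XYZ tile containing a GPS point.

    Assumes Web Mercator tiling, where zoom level z divides the world
    into 2**z by 2**z tiles, with (0, 0) at the top-left corner.
    """
    n = 2 ** zoom
    x = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so that points on the far edge still map to a valid tile.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


# Example: the tile containing a point in central Boston, at an assumed zoom of 15.
boston_tile = tile_for_gps(42.3601, -71.0589, 15)
```

The resulting indices, together with the zoom level, identify one tile per image, which can then be stacked with the street-level image as model input.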

Process 3: Interface

How can digital methods capture human perspective, to inform both urbanism and computation? By developing an interface for crowdsourced labeling, we can collect human perception at scale. These labels can be used to inform machine learning models, and can optionally be attributed to their contributors.

The first task of the crowdsourced interface asked participants to view a previously collected image and guess its location. This provides a scalar value of distance from the correct location, which can be averaged over many trials to build a sense of which views of the street are more “locatable.”
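The distance-averaging idea can be sketched in a few lines. This is an illustration only: it assumes each trial records an image identifier, the true coordinates and the guessed coordinates, and it uses great-circle (haversine) distance as the error measure, which the project may or may not have used. The function names and record layout are hypothetical.

```python
import math
from collections import defaultdict

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def mean_guess_error(trials):
    """Average the guess error per image.

    trials is an iterable of (image_id, true_lat, true_lon, guess_lat, guess_lon).
    Returns {image_id: mean error in km}; a lower value suggests a more
    "locatable" view.
    """
    errors = defaultdict(list)
    for image_id, true_lat, true_lon, guess_lat, guess_lon in trials:
        errors[image_id].append(haversine_km(true_lat, true_lon, guess_lat, guess_lon))
    return {image_id: sum(dists) / len(dists) for image_id, dists in errors.items()}
```

Sorting images by this mean error gives a simple ranking of views from most to least locatable.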

The second task of the crowdsourced interface asked participants to label images according to Kevin Lynch’s elements of the city image. Participants who completed the survey could also optionally submit a memory of their own.